Ingress

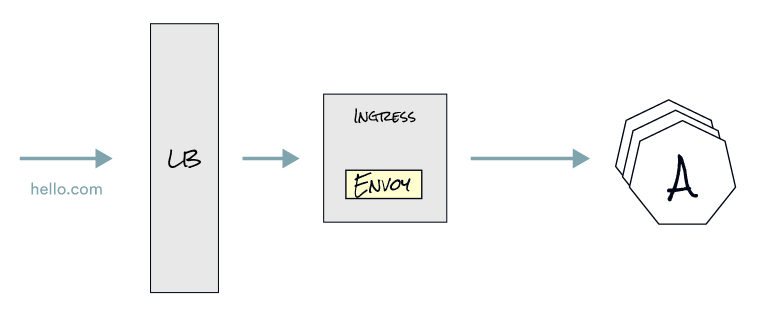

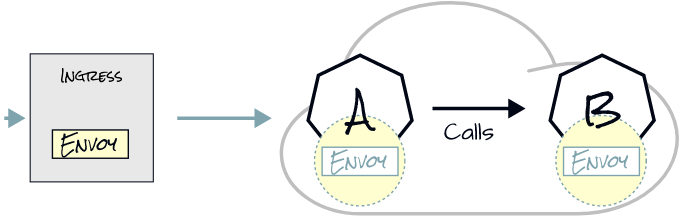

Returning to our example of Service A calling Service B, the flow is the same when an Ingress sits in front. If we configure hello.com to point to the IP address of the load balancer, requests for hello.com reach the Ingress Controller (in our case, an Envoy proxy). The Ingress Controller routes each request to Service A based on its routing rules. From there, Service A can call Service B.

Istio uses Ingress Gateways to configure load balancers operating at the edge of a service mesh. An Ingress Gateway lets you define the entry points into the mesh through which all incoming traffic flows.

We can configure an Ingress Gateway by using Istio's Gateway resource. A Gateway resource manages inbound and outbound traffic for your mesh, letting you specify which traffic may enter or leave it. Gateway configurations are applied to standalone Envoy proxies running at the edge of the mesh, rather than to the sidecar Envoy proxies running alongside service workloads.
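As a rough illustration, a Gateway that accepts plain HTTP traffic for hello.com might look like the sketch below. The resource name `hello-gateway` and the `default` namespace are hypothetical, and the selector `istio: ingressgateway` assumes the label carried by Istio's default ingress gateway deployment; your installation may use a different label.

```yaml
# Minimal sketch of a Gateway; names and selector label are assumptions.
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: hello-gateway        # hypothetical name
  namespace: default         # hypothetical namespace
spec:
  # Selects the standalone Envoy proxy (the ingress gateway Pod) at the
  # edge of the mesh that this configuration is applied to.
  selector:
    istio: ingressgateway    # assumed label of the default ingress gateway
  servers:
    - port:
        number: 80
        name: http
        protocol: HTTP
      # Only requests for this host are admitted into the mesh.
      hosts:
        - "hello.com"
```

Note that this resource only opens a port and declares which hosts are accepted. It says nothing about where the traffic goes once it is inside the mesh.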

It is important to note that the Gateway resource doesn’t define routing rules or traffic shifting concerns. For this purpose, Istio’s API provides the VirtualService and DestinationRule resources that we’ll explain in detail in the traffic shifting chapter.
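To make the separation concrete, here is a minimal sketch of a VirtualService that attaches to a Gateway and sends requests for hello.com to Service A. It assumes a Gateway named `hello-gateway` already exists in the same namespace and that Service A is exposed as a Kubernetes Service called `service-a` on port 80; all of these names are hypothetical.

```yaml
# Minimal sketch of routing rules bound to a Gateway; all names are assumptions.
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: hello-routes         # hypothetical name
  namespace: default         # hypothetical namespace
spec:
  hosts:
    - "hello.com"
  # Binds these routes to traffic entering through the named Gateway.
  gateways:
    - hello-gateway          # hypothetical Gateway name
  http:
    - route:
        - destination:
            host: service-a  # hypothetical Kubernetes Service for Service A
            port:
              number: 80
```

The `gateways` field is what ties the routing rules to the entry point: the Gateway decides what may enter the mesh, and the VirtualService decides where it goes.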

In summary, what can we say about Ingress?

- The Ingress Pod runs in the istio-system namespace.

- It is configured logically in Kubernetes with the Gateway custom resource.

- Routing is configured separately with VirtualService and DestinationRule resources.

Next

In the next lab, we expose the web-frontend using an Istio Ingress Gateway, which allows us to access this application on the web.